Forwarding performance lab of Netgate RCC-VE 4860

Forwarding performance lab of a quad-core Intel Atom C2558 (2.40GHz) with 2+4 Gigabit Intel NICs

Hardware detail

This lab will test a Netgate RCC-VE 4860 (dmesg):

- Quad-core Intel Atom C2558 (2.40GHz)

- 2 Gigabit Intel i211

- 4 Gigabit Intel i350

- 8 GB of RAM

Lab set-up

For the full setup of this lab (switch configuration, etc.), see: Setting up a forwarding performance benchmark lab.

Diagram

+------------------------------------------+    +-----------------------+
| Device under Test                        |    | Packet gen            |
| igb2: 198.18.0.209/24                    |<===| igb2: 198.18.0.203    |
| 2001:2::209/64                           |    | 2001:2::203/64        |
| (00:08:a2:09:33:da)                      |    | (00:1b:21:c4:95:7a)   |
|                                          |    |                       |
| igb3: 198.19.0.209/24                    |    | igb3: 198.19.0.203    |
| 2001:2:0:8000::209/64                    |    | 2001:2:0:8000::203/64 |
| (00:08:a2:09:33:db)                      |===>| (00:1b:21:c4:95:7b)   |
|                                          |    |                       |
| static routes                            |    |                       |
| 198.19.0.0/16 => 198.19.0.203            |    +-----------------------+
| 198.18.0.0/16 => 198.18.0.203            |
| 2001:2::/49 => 2001:2::203               |
| 2001:2:0:8000::/49 => 2001:2:0:8000::203 |
|                                          |
| static arp and ndp                       |
| 198.18.0.203 => 00:1b:21:c4:95:7a        |
| 2001:2::203                              |
|                                          |
| 198.19.0.203 => 00:1b:21:c4:95:7b        |
| 2001:2:0:8000::203                       |
|                                          |
+------------------------------------------+

This device uses two kinds of Intel NICs:

- igb0 and igb1: Intel i211 with 2 queues, intended for administration

- igb2 to igb5: Intel i350 with 4 queues (and iPXE support), intended for forwarding/firewalling

The generator MUST generate lots of IP flows (multiple source/destination IP addresses and/or UDP src/dst ports) at minimum packet size (to reach the maximum packet rate), using one of these commands:

Multiple source/destination IP addresses (remember to specify the UDP port, because the default port 0 is filtered by pf):

pkt-gen -i igb2 -f tx -n 80000000 -l 60 -d 198.19.10.1:2000-198.19.10.20 -D 00:08:a2:09:33:da -s 198.18.10.1:2000-198.18.10.100 -S 00:1b:21:c4:95:7a -w 4 -U

And the same with IPv6 flows (minimum frame size of 62 bytes, needed for a valid empty UDP packet):

pkt-gen -f tx -i igb2 -n 1000000000 -l 62 -6 -d "[2001:2:0:8001::1]-[2001:2:0:8001::64]" -D 00:08:a2:09:33:da -s "[2001:2:0:1::1]-[2001:2:0:1::14]" -S 00:1b:21:c4:95:7a -w 4 -U
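The two -l values used above follow from the header sizes. The sketch below adds them up, assuming an IPv4 header without options and an IPv6 header without extension headers, and assuming the length passed to pkt-gen excludes the 4-byte Ethernet FCS. The function name udp_frame_len is made up for this illustration.

```sh
#!/bin/sh
# Smallest UDP frame (empty payload, FCS not counted) for IP version 4 or 6.
udp_frame_len() {
    case "$1" in
        4) ip_hdr=20 ;;   # IPv4 header without options
        6) ip_hdr=40 ;;   # fixed IPv6 header, no extension headers
        *) echo "usage: udp_frame_len 4|6" >&2; return 1 ;;
    esac
    # 14 bytes Ethernet header + IP header + 8 bytes UDP header
    echo $(( 14 + ip_hdr + 8 ))
}

udp_frame_len 4   # 42: below the 60-byte Ethernet minimum, so -l 60 pads the frame
udp_frame_len 6   # 62: already above the minimum, hence -l 62
```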

The receiver uses this command:

pkt-gen -i igb3 -f rx -w 4

Basic configuration and tuning

Disabling Ethernet flow-control

First, disable Ethernet flow-control:

echo "dev.igb.2.fc=0" >> /etc/sysctl.conf
echo "dev.igb.3.fc=0" >> /etc/sysctl.conf
service sysctl restart

Enabling Tx abdicate (iflib drivers)

Second, if the NIC driver is iflib-based, enable Tx abdicate (done automatically on BSDRP, but not on a generic FreeBSD):

cat <<EOF >> /etc/sysctl.conf
# Enabling Tx abdicate
dev.igb.0.iflib.tx_abdicate=1
dev.igb.1.iflib.tx_abdicate=1
dev.igb.2.iflib.tx_abdicate=1
dev.igb.3.iflib.tx_abdicate=1
dev.igb.4.iflib.tx_abdicate=1
dev.igb.5.iflib.tx_abdicate=1
EOF
service sysctl restart
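Since the six entries differ only by interface index, they can also be generated with a loop. This is a minimal sketch: the helper name tx_abdicate_lines is hypothetical, and it only prints the lines so they can be reviewed before being appended to /etc/sysctl.conf.

```sh
#!/bin/sh
# Print one tx_abdicate line per igb interface, numbered 0 to count-1.
tx_abdicate_lines() {
    count=$1
    i=0
    while [ "$i" -lt "$count" ]; do
        echo "dev.igb.${i}.iflib.tx_abdicate=1"
        i=$((i + 1))
    done
}

# This device has six igb ports (igb0 to igb5).
tx_abdicate_lines 6
```

Once the output looks right, it can be redirected with: tx_abdicate_lines 6 >> /etc/sysctl.conf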

Disabling ICMP redirect

ICMP redirect is enabled by default on a generic FreeBSD (it is disabled by default on BSDRP), and this feature disables the fast tryforward code path:

cat <<EOF >> /etc/sysctl.conf
# Enabling fastforwarding by disabling ICMP redirect
net.inet.ip.redirect=0
net.inet6.ip6.redirect=0
EOF
service sysctl restart
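The sysctl snippets in this section append to /etc/sysctl.conf, so running them twice leaves duplicate entries. One possible way to make the step repeatable is to append a line only when it is not already present. The helper add_sysctl below is a hypothetical name, not part of FreeBSD or BSDRP.

```sh
#!/bin/sh
# Append "name=value" to a sysctl.conf-style file unless that exact line exists.
# $1 = line to add, $2 = target file (defaults to /etc/sysctl.conf)
add_sysctl() {
    line=$1
    file=${2:-/etc/sysctl.conf}
    # -x: match the whole line, -F: fixed string, -q: quiet
    grep -qxF -- "$line" "$file" 2>/dev/null || echo "$line" >> "$file"
}

# Example (as root on the device under test):
#   add_sysctl "net.inet.ip.redirect=0"
#   add_sysctl "net.inet6.ip6.redirect=0"
#   service sysctl restart
```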

IP Configuration

Configure IP addresses, static ARP entries and static routes.

A router should not use LRO and TSO. BSDRP disables them by default using an RC script (disablelrotso_enable="YES" in /etc/rc.conf.misc).

# IPv4 router
gateway_enable="YES"
ifconfig_igb2="inet 198.18.0.209/25 -tso4 -tso6 -lro"
ifconfig_igb3="inet 198.19.0.209/25 -tso4 -tso6 -lro"
static_routes="generator receiver"
route_generator="-net 198.18.0.0/16 198.18.0.203"
route_receiver="-net 198.19.0.0/16 198.19.0.203"
static_arp_pairs="receiver generator"
static_arp_generator="198.18.0.203 00:1b:21:c4:95:7a"
static_arp_receiver="198.19.0.203 00:1b:21:c4:95:7b"
# IPv6 router
ipv6_gateway_enable="YES"
ipv6_activate_all_interfaces="YES"
ifconfig_igb2_ipv6="inet6 2001:2::209 prefixlen 64"
ifconfig_igb3_ipv6="inet6 2001:2:0:8000::209 prefixlen 64"
ipv6_static_routes="generator receiver"
ipv6_route_generator="2001:2:: -prefixlen 49 2001:2::203"
ipv6_route_receiver="2001:2:0:8000:: -prefixlen 49 2001:2:0:8000::203"
static_ndp_pairs="generator receiver"
static_ndp_generator="2001:2::203 00:1b:21:c4:95:7a"
static_ndp_receiver="2001:2:0:8000::203 00:1b:21:c4:95:7b"

Forwarding rate

The first test starts one packet generator at Gigabit line rate (1.488 Mpps). The pkt-gen receiver output:

378.931863 main_thread [2514] 1.126 Mpps (1.127 Mpkts 541.181 Mbps in 1001661 usec) 10.97 avg_batch 1014 min_space
379.933862 main_thread [2514] 1.124 Mpps (1.126 Mpkts 540.628 Mbps in 1002000 usec) 10.79 avg_batch 1015 min_space
380.934863 main_thread [2514] 1.122 Mpps (1.123 Mpkts 539.050 Mbps in 1001000 usec) 10.87 avg_batch 1015 min_space
381.936861 main_thread [2514] 1.121 Mpps (1.123 Mpkts 539.214 Mbps in 1001999 usec) 10.94 avg_batch 1015 min_space
382.938075 main_thread [2514] 1.122 Mpps (1.123 Mpkts 539.200 Mbps in 1001213 usec) 11.11 avg_batch 1015 min_space
383.938862 main_thread [2514] 1.121 Mpps (1.122 Mpkts 538.636 Mbps in 1000788 usec) 11.26 avg_batch 1014 min_space
384.940861 main_thread [2514] 1.121 Mpps (1.123 Mpkts 539.078 Mbps in 1001999 usec) 11.40 avg_batch 1015 min_space
385.979862 main_thread [2514] 1.121 Mpps (1.165 Mpkts 558.969 Mbps in 1039001 usec) 11.38 avg_batch 1014 min_space
386.980862 main_thread [2514] 1.121 Mpps (1.122 Mpkts 538.379 Mbps in 1000999 usec) 11.69 avg_batch 1015 min_space
387.982862 main_thread [2514] 1.121 Mpps (1.123 Mpkts 539.269 Mbps in 1002001 usec) 12.01 avg_batch 1015 min_space
388.983863 main_thread [2514] 1.120 Mpps (1.121 Mpkts 538.263 Mbps in 1001000 usec) 12.33 avg_batch 1016 min_space
389.998864 main_thread [2514] 1.121 Mpps (1.138 Mpkts 546.116 Mbps in 1015001 usec) 11.92 avg_batch 1014 min_space

It is able to forward at about 1.12 Mpps.
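Rather than reading the rate off the log by eye, the per-second samples can be averaged. The sketch below assumes the receiver output was saved to a file and uses the "N Mpps" line format shown above. It does not handle the older "N pps" format, and the function name avg_mpps and the file name receiver.log are hypothetical.

```sh
#!/bin/sh
# Average the per-second rate from pkt-gen lines of the form
# "... main_thread [2514] 1.126 Mpps (...)". Reads stdin, prints Mpps.
avg_mpps() {
    awk '{
        # The rate is the field immediately before the first "Mpps" token.
        for (i = 2; i <= NF; i++)
            if ($i == "Mpps") { sum += $(i - 1); n++; break }
    }
    END { if (n > 0) printf "%.3f\n", sum / n }'
}

# Example: avg_mpps < receiver.log
```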

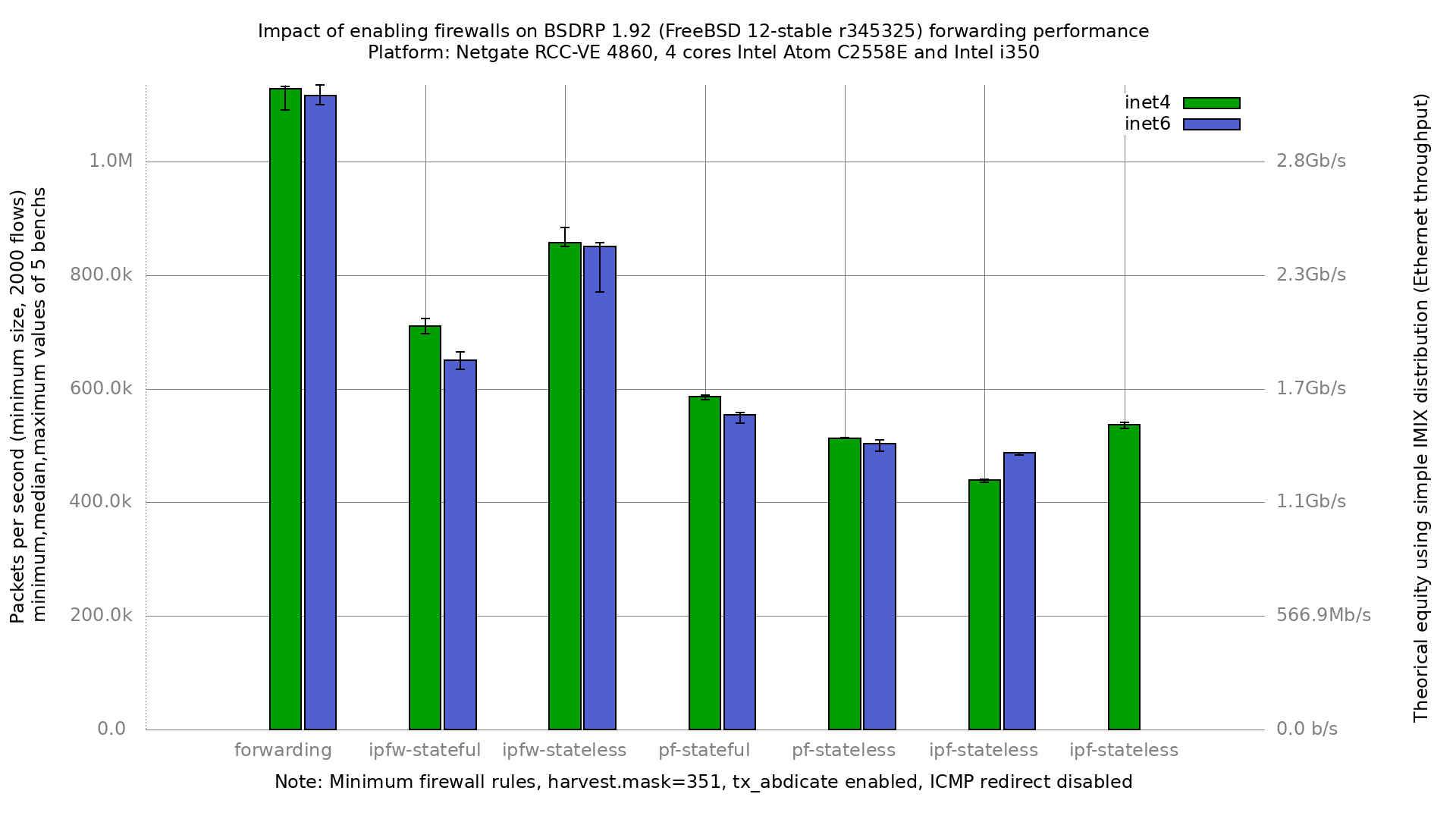

Firewalls impact

This test generates 2000 different flows by combining 20 different source IP addresses with 100 different destination IP addresses.

Graph

Scale information for Gigabit Ethernet:

- 1.488 Mpps is the maximum packet-per-second (pps) rate, reached with the smallest packets (46-byte payload).

- 81 Kpps is the minimum pps rate at line rate, reached with the biggest packets (1500-byte payload).
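Both figures come from dividing the 1 Gb/s line rate by the bits each frame occupies on the wire. The sketch below assumes untagged Ethernet: 18 bytes of header and FCS, plus 20 bytes of preamble, start-of-frame delimiter and inter-frame gap per frame. The function name max_pps is made up for this illustration.

```sh
#!/bin/sh
# Maximum packets per second on Gigabit Ethernet for a given payload size.
max_pps() {
    payload=$1
    # On-wire bytes per frame: payload + 18 (Ethernet header + FCS)
    #                                  + 20 (preamble, SFD, inter-frame gap)
    echo $(( 1000000000 / ((payload + 18 + 20) * 8) ))
}

max_pps 46     # smallest payload: 1488095 pps, i.e. 1.488 Mpps
max_pps 1500   # largest standard payload: 81274 pps, i.e. about 81 Kpps
```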

Netmap performance

pkt-gen

Intel NIC drivers are netmap-ready. What rate can netmap's packet generator/receiver reach?

As a receiver (the sender is emitting at 1.48 Mpps):

[root@BSDRP]~# pkt-gen -i igb2 -f rx -w 4
186.632724 main [1705] interface is igb2
186.632937 extract_ip_range [289] range is 10.0.0.1:0 to 10.0.0.1:0
186.632963 extract_ip_range [289] range is 10.1.0.1:0 to 10.1.0.1:0
186.957406 main [1896] mapped 334980KB at 0x801dff000
Receiving from netmap:igb2: 4 queues, 1 threads and 1 cpus.
186.957504 main [1982] Wait 4 secs for phy reset
190.958543 main [1984] Ready...
190.958678 nm_open [456] overriding ifname igb2 ringid 0x0 flags 0x1
190.958863 receiver_body [1238] reading from netmap:igb2 fd 4 main_fd 3
191.960540 main_thread [1502] 0 pps (0 pkts in 1001704 usec)
192.022046 receiver_body [1245] waiting for initial packets, poll returns 0 0
192.962539 main_thread [1502] 0 pps (0 pkts in 1001999 usec)
193.040557 receiver_body [1245] waiting for initial packets, poll returns 0 0
193.964552 main_thread [1502] 329506 pps (330169 pkts in 1002013 usec)
194.966546 main_thread [1502] 1488135 pps (1491104 pkts in 1001995 usec)
195.968552 main_thread [1502] 1488144 pps (1491128 pkts in 1002005 usec)
196.970547 main_thread [1502] 1488091 pps (1491060 pkts in 1001995 usec)
197.972545 main_thread [1502] 1488169 pps (1491142 pkts in 1001998 usec)
198.974550 main_thread [1502] 1488141 pps (1491125 pkts in 1002005 usec)
199.976507 main_thread [1502] 1488138 pps (1491052 pkts in 1001958 usec)
200.978546 main_thread [1502] 1488118 pps (1491151 pkts in 1002038 usec)
201.980545 main_thread [1502] 1488143 pps (1491118 pkts in 1001999 usec)
202.982551 main_thread [1502] 1488145 pps (1491130 pkts in 1002006 usec)
203.984386 main_thread [1502] 1488136 pps (1490867 pkts in 1001835 usec)
204.985545 main_thread [1502] 1488137 pps (1489862 pkts in 1001159 usec)
205.987544 main_thread [1502] 1488135 pps (1491110 pkts in 1001999 usec)
206.989545 main_thread [1502] 1488113 pps (1491091 pkts in 1002001 usec)
207.991543 main_thread [1502] 1488143 pps (1491116 pkts in 1001998 usec)
208.993545 main_thread [1502] 1488147 pps (1491126 pkts in 1002002 usec)
209.994551 main_thread [1502] 1488135 pps (1489632 pkts in 1001006 usec)
210.995544 main_thread [1502] 1488116 pps (1489595 pkts in 1000994 usec)
211.997543 main_thread [1502] 1488145 pps (1491120 pkts in 1001999 usec)
212.998542 main_thread [1502] 1488153 pps (1489638 pkts in 1000998 usec)

It sustains the maximum Gigabit Ethernet frame rate (1.488 Mpps) as a netmap receiver.

As a packet generator:

[root@BSDRP]~# pkt-gen -i igb2 -f tx -w 4 -c 2 -n 80000000 -l 60 -d 2.1.3.1-2.1.3.20 -D 00:1b:21:d4:3f:2a -s 1.1.3.3-1.1.3.100
550.392443 main [1705] interface is igb2
550.392739 extract_ip_range [289] range is 1.1.3.3:0 to 1.1.3.100:0
550.392771 extract_ip_range [289] range is 2.1.3.1:0 to 2.1.3.20:0
550.717236 main [1896] mapped 334980KB at 0x801dff000
Sending on netmap:igb2: 4 queues, 1 threads and 2 cpus.
1.1.3.3 -> 2.1.3.1 (00:00:00:00:00:00 -> 00:1b:21:d4:3f:2a)
550.717342 main [1958] --- SPECIAL OPTIONS: copy
550.717352 main [1980] Sending 512 packets every 0.000000000 s
550.717361 main [1982] Wait 4 secs for phy reset
554.743524 main [1984] Ready...
554.743659 nm_open [456] overriding ifname igb2 ringid 0x0 flags 0x1
554.743854 sender_body [1064] start, fd 4 main_fd 3
555.753521 main_thread [1502] 1466573 pps (1480787 pkts in 1009692 usec)
556.754522 main_thread [1502] 1488145 pps (1489635 pkts in 1001001 usec)
557.756031 main_thread [1502] 1488145 pps (1490391 pkts in 1001509 usec)
558.756528 main_thread [1502] 1488112 pps (1488852 pkts in 1000497 usec)
559.757526 main_thread [1502] 1488130 pps (1489615 pkts in 1000998 usec)
560.759520 main_thread [1502] 1488142 pps (1491109 pkts in 1001994 usec)
561.761527 main_thread [1502] 1488130 pps (1491105 pkts in 1001999 usec)
562.763520 main_thread [1502] 1488135 pps (1491113 pkts in 1002001 usec)
563.764527 main_thread [1502] 1488119 pps (1489619 pkts in 1001008 usec)
564.766519 main_thread [1502] 1488164 pps (1491127 pkts in 1001991 usec)
565.768520 main_thread [1502] 1488116 pps (1491094 pkts in 1002001 usec)
566.770520 main_thread [1502] 1488151 pps (1491127 pkts in 1002000 usec)
567.771520 main_thread [1502] 1488112 pps (1489600 pkts in 1001000 usec)
568.773518 main_thread [1502] 1488140 pps (1491113 pkts in 1001998 usec)
^C568.885112 sigint_h [326] received control-C on thread 0x801806800
568.885184 sender_body [1162] flush tail 350 head 350 on thread 0x801806800
568.885222 sender_body [1170] pending tx tail 628 head 582 on ring 0
568.885280 sender_body [1170] pending tx tail 637 head 582 on ring 0
568.885315 sender_body [1170] pending tx tail 659 head 582 on ring 0
568.885393 sender_body [1170] pending tx tail 685 head 582 on ring 0
568.885450 sender_body [1170] pending tx tail 709 head 582 on ring 0
569.775515 main_thread [1502] 165737 pps (166068 pkts in 1001997 usec)
Sent 21022355 packets, 60 bytes each, in 14.15 seconds.
Speed: 1.49 Mpps Bandwidth: 713.30 Mbps (raw 998.62 Mbps)

No problem in this direction either: Gigabit line rate is a piece of cake with netmap on this platform.